AI Primitives vs. Frameworks: Choosing the Right Foundation for Secure, Scalable AI Agents

As AI agents become central to cybersecurity and enterprise automation, developers and security professionals face a crucial architectural question: should you build with low-level AI primitives or leverage high-level frameworks like CrewAI, AutoGen, or LangGraph? The answer is not always clear-cut. Each approach brings distinct advantages and trade-offs, especially when applied to sensitive domains like AI-powered security tooling.

This post aims to provide a balanced, in-depth analysis of both strategies, using the example of AI security tools to ground the discussion. Whether you’re building your first prototype or scaling to millions of agent runs, understanding these options can help you make more informed, future-proof decisions.

What Are AI Primitives and Frameworks?

At their core, AI primitives are the fundamental, composable building blocks of an AI system. They include components such as memory stores, context managers, tool APIs, and vector databases. Primitives offer granular control over system architecture, data flow, and performance. Much like cryptographic primitives in security, they are simple, flexible, and can be assembled in countless ways to meet unique requirements.
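To make the idea of composable primitives concrete, here is a minimal Python sketch that uses only the standard library. The class names `MemoryStore`, `ContextBuilder` (playing the role of a context manager) and `ToolRegistry` are hypothetical. The word-count budget is a crude stand-in for real token counting. The point is that each piece is small and inspectable, and your own code wires the pieces together explicitly.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class MemoryStore:
    """Memory primitive: an append-only, ordered list of past messages."""
    items: list[str] = field(default_factory=list)

    def add(self, text: str) -> None:
        self.items.append(text)


@dataclass
class ContextBuilder:
    """Context primitive: packs the newest memories into a fixed budget.

    The budget counts whitespace-separated words as a crude stand-in for
    model tokens (an assumption; a real system would use a tokenizer).
    """
    max_words: int

    def build(self, memory: MemoryStore, query: str) -> str:
        used = len(query.split())
        kept: list[str] = []
        # Walk memory newest-first and stop at the first item that won't fit.
        for item in reversed(memory.items):
            cost = len(item.split())
            if used + cost > self.max_words:
                break
            kept.append(item)
            used += cost
        # Restore chronological order and put the query last.
        return "\n".join(list(reversed(kept)) + [query])


class ToolRegistry:
    """Tool-API primitive: an explicit, auditable name -> function map."""

    def __init__(self) -> None:
        self._tools: dict[str, Callable[[str], str]] = {}

    def register(self, name: str, fn: Callable[[str], str]) -> None:
        self._tools[name] = fn

    def call(self, name: str, arg: str) -> str:
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        return self._tools[name](arg)


# Composing the three primitives by hand.
memory = MemoryStore()
memory.add("alert: failed SSH login from 203.0.113.7")
memory.add("alert: 50 failed logins in 60s")
prompt = ContextBuilder(max_words=20).build(memory, "Summarize recent alerts.")
tools = ToolRegistry()
tools.register("normalize", str.upper)  # hypothetical toy tool
print(prompt)
print(tools.call("normalize", "ok"))  # OK
```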

AI frameworks, in contrast, are higher-level abstractions that bundle together common patterns, workflows, and integrations. Frameworks like CrewAI, AutoGen, and LangGraph provide ready-made modules for memory, orchestration, and external tool integration. They aim to accelerate development, standardize best practices, and reduce the need to “reinvent the wheel.”
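For contrast, the sketch below shows the kind of decide, act, observe loop that agent frameworks typically package as "orchestration." It is not code from CrewAI, AutoGen or LangGraph, and it does not mirror any of their APIs. The names `run_agent`, `scripted_model` and the `lookup_ip` tool are hypothetical. A scripted function stands in for the LLM so the example runs offline.

```python
from typing import Callable

# What the model decides each turn: (tool name or "finish", payload).
Step = tuple[str, str]


def run_agent(
    decide: Callable[[list[str]], Step],
    tools: dict[str, Callable[[str], str]],
    task: str,
    max_steps: int = 5,
) -> str:
    """Generic decide -> act -> observe loop of the kind frameworks wrap for you."""
    transcript = [f"task: {task}"]
    for _ in range(max_steps):
        action, payload = decide(transcript)
        if action == "finish":
            return payload
        # Run the chosen tool and feed its result back as an observation.
        observation = tools[action](payload)
        transcript.append(f"{action}({payload}) -> {observation}")
    raise RuntimeError("step budget exhausted")


def scripted_model(transcript: list[str]) -> Step:
    """Hypothetical stand-in for an LLM: looks up one IP, then answers."""
    if len(transcript) == 1:
        return ("lookup_ip", "203.0.113.7")
    return ("finish", transcript[-1])


answer = run_agent(
    scripted_model,
    {"lookup_ip": lambda ip: "known brute-force source"},  # hypothetical tool
    "Is 203.0.113.7 malicious?",
)
print(answer)  # lookup_ip(203.0.113.7) -> known brute-force source
```

Much of what a framework provides is built around a loop like this. That bundling saves effort, but it can also make each step harder to inspect.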

AI Security Tools: A Practical Comparison

Consider the challenge of building an AI-powered threat detection agent. With primitives, you might directly connect your agent to a vector database for log storage, implement custom context management, and wire up APIs for querying threat intelligence feeds. This approach allows for fine-tuned optimization, rigorous security controls, and rapid adaptation to new attack vectors or compliance mandates. You have the freedom—and responsibility—to audit every component and data flow.
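Here is a minimal sketch of that primitives-based wiring, under heavy simplifying assumptions:

- `LogVectorStore` is an in-memory stand-in for a real vector database.
- `embed` uses token counts instead of an embedding model.
- The threat-intelligence feed is a hypothetical local dictionary rather than a real API.

What carries over to a real build is the shape: retrieval, enrichment, and an audit trail that you own end to end.

```python
import math
import re
from collections import Counter

TOKEN_RE = re.compile(r"[a-z0-9.]+")
IPV4_RE = re.compile(r"\b\d{1,3}(?:\.\d{1,3}){3}\b")


def embed(text: str) -> Counter:
    """Toy embedding: token counts. A real agent would call an embedding model."""
    return Counter(TOKEN_RE.findall(text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class LogVectorStore:
    """In-memory stand-in for a vector database that stores log lines."""

    def __init__(self) -> None:
        self._rows: list[tuple[str, Counter]] = []

    def add(self, line: str) -> None:
        self._rows.append((line, embed(line)))

    def search(self, query: str, k: int = 2) -> list[str]:
        q = embed(query)
        ranked = sorted(self._rows, key=lambda row: cosine(q, row[1]), reverse=True)
        return [line for line, _ in ranked[:k]]


class ThreatDetectionAgent:
    """Retrieves relevant logs, enriches IPs from threat intel, audits each step."""

    def __init__(self, store: LogVectorStore, intel: dict[str, str]) -> None:
        self.store = store
        self.intel = intel  # hypothetical local feed; swap in a real API client
        self.audit: list[tuple[str, str, str]] = []

    def investigate(self, question: str, k: int = 2) -> dict:
        context = self.store.search(question, k)
        self.audit.append(("vector_search", question, f"{len(context)} hits"))
        findings: dict[str, str] = {}
        for line in context:
            for ip in IPV4_RE.findall(line):
                verdict = self.intel.get(ip, "no match")
                self.audit.append(("intel_lookup", ip, verdict))
                findings[ip] = verdict
        return {"context": context, "findings": findings}


store = LogVectorStore()
store.add("sshd: failed password for root from 203.0.113.7")
store.add("nginx: GET /index.html 200 from 198.51.100.4")
store.add("sshd: failed password for admin from 192.0.2.10")

agent = ThreatDetectionAgent(store, {"203.0.113.7": "known brute-force source"})
report = agent.investigate("failed password sshd")
print(report["findings"])
for entry in agent.audit:
    print(entry)
```

Every retrieval and lookup passes through code you wrote. Adding an audit entry or a compliance check is therefore a one-line change, not a question of what a framework happens to expose.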

Using a framework like CrewAI, you benefit from pre-built connectors, memory modules, and orchestration logic. This can dramatically speed up prototyping and initial deployment, especially for teams with limited AI engineering resources. Frameworks often encapsulate industry best practices, reducing the risk of architectural mistakes. However, their abstractions may limit your ability to customize workflows, enforce bespoke security policies, or quickly respond to emerging threats.

Strengths and Limitations: A Deeper Look

Primitives excel in scenarios requiring maximum flexibility, transparency, and performance. They are well-suited for production systems that must scale, adapt to rapidly evolving LLM APIs, or meet stringent security requirements. However, building with primitives demands significant expertise and effort. Teams may need to implement features that frameworks provide out-of-the-box, increasing development time and complexity.
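A deny-by-default gate on tool calls is one security requirement that is straightforward to enforce at the primitive level. The sketch below assumes two hypothetical tools, `lookup_ip` and `shell`. Only `lookup_ip` is allowlisted, and only for arguments that look like a bare IPv4 address. It illustrates the pattern; it is not a complete defense against prompt injection.

```python
import re
from dataclasses import dataclass, field
from typing import Callable

IPV4_RE = re.compile(r"\d{1,3}(?:\.\d{1,3}){3}")


@dataclass
class ToolPolicy:
    """Deny by default: a tool runs only if its validator accepts the argument."""
    validators: dict[str, Callable[[str], bool]]

    def allows(self, tool: str, arg: str) -> bool:
        check = self.validators.get(tool)
        return check is not None and check(arg)


@dataclass
class GuardedToolRunner:
    """Routes every tool call through the policy and records each decision."""
    tools: dict[str, Callable[[str], str]]
    policy: ToolPolicy
    audit: list[tuple[str, str, str]] = field(default_factory=list)

    def run(self, tool: str, arg: str) -> str:
        if not self.policy.allows(tool, arg) or tool not in self.tools:
            self.audit.append((tool, arg, "denied"))
            raise PermissionError(f"policy denied {tool}({arg!r})")
        self.audit.append((tool, arg, "allowed"))
        return self.tools[tool](arg)


policy = ToolPolicy(validators={"lookup_ip": lambda a: IPV4_RE.fullmatch(a) is not None})
runner = GuardedToolRunner(
    tools={
        "lookup_ip": lambda ip: f"checked {ip}",  # hypothetical intel lookup
        "shell": lambda cmd: "should never run",  # registered but not allowlisted
    },
    policy=policy,
)

print(runner.run("lookup_ip", "203.0.113.7"))  # checked 203.0.113.7
for tool, arg in [("shell", "rm -rf /"), ("lookup_ip", "1.2.3.4; rm -rf /")]:
    try:
        runner.run(tool, arg)
    except PermissionError as err:
        print("blocked:", err)
```

The policy is kept separate from the tools, so it can be reviewed and tested on its own. That is part of the transparency argument for primitives.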

Frameworks shine when speed and ease of development are paramount. They are ideal for rapid prototyping, standardized workflows, and environments where integration breadth outweighs the need for custom optimization. Yet, frameworks can introduce overhead, obscure internal logic, and lag behind the latest advances in LLM capabilities or security standards. Over time, their abstractions may become a source of technical debt or operational bottlenecks.

When to Use Primitives vs. Frameworks

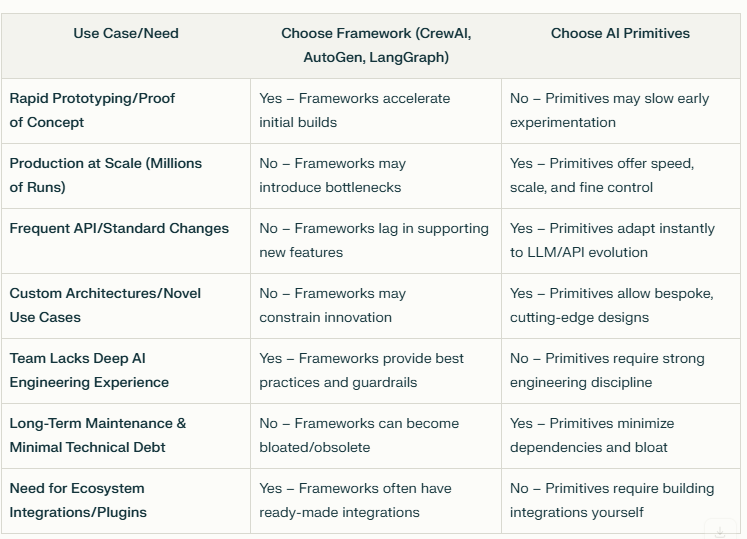

There is no universal answer. The best choice depends on your project’s maturity, threat landscape, team expertise, and long-term goals.

Early-stage projects or teams new to AI may benefit from frameworks’ guardrails and rapid development cycles.

Production-scale, high-security environments may require the transparency, control, and adaptability that primitives provide.

Hybrid approaches are also possible: some teams start with frameworks for prototyping, then gradually replace components with primitives as needs evolve.
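One way to keep that gradual replacement cheap is to hide each component behind a small interface, so agent code never depends on a specific implementation. This is sketched here as one option, not a prescription. `PrototypeMemory` stands in for a framework-supplied memory module; it does not wrap any real framework API. `BoundedMemory` is a hand-built replacement with a hard retention cap. All names are hypothetical.

```python
from collections import deque
from typing import Protocol


class Memory(Protocol):
    """The seam: agent code depends only on this interface."""

    def save(self, text: str) -> None: ...
    def load(self, limit: int) -> list[str]: ...


class PrototypeMemory:
    """Stand-in for a framework-supplied memory module (hypothetical; not a real framework API)."""

    def __init__(self) -> None:
        self._items: list[str] = []

    def save(self, text: str) -> None:
        self._items.append(text)

    def load(self, limit: int) -> list[str]:
        return self._items[-limit:] if limit > 0 else []


class BoundedMemory:
    """Hand-built primitive replacement: keeps at most `capacity` entries."""

    def __init__(self, capacity: int) -> None:
        self._items: deque[str] = deque(maxlen=capacity)

    def save(self, text: str) -> None:
        self._items.append(text)

    def load(self, limit: int) -> list[str]:
        return list(self._items)[-limit:] if limit > 0 else []


def triage(memory: Memory, alert: str) -> list[str]:
    """Agent step that does not know which Memory implementation it is using."""
    memory.save(alert)
    return memory.load(limit=2)


old = PrototypeMemory()            # prototype phase
new = BoundedMemory(capacity=100)  # after swapping in the primitive
for alert in ["port scan", "failed login", "malware hash match"]:
    from_old = triage(old, alert)
    from_new = triage(new, alert)
print(from_old == from_new)  # True
```

`triage` depends only on the `Memory` protocol. Swapping implementations therefore requires no change to the agent logic.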

See the table below:

As AI technology and security threats continue to evolve, the trade-offs between primitives and frameworks will only become more nuanced. What has your experience been? Have you encountered challenges migrating from frameworks to primitives, or vice versa? How do you balance the need for rapid innovation with the demands of security and scale?

I invite you to share your perspectives, questions, and stories in the comments. Let’s build a deeper, more practical understanding of how to architect the next generation of secure, scalable AI agents—together.

If you found this analysis useful, consider subscribing for more discussions on AI engineering and security. Your insights and experiences are always welcome!

You may also want to pre-order this book, which will go deep into many aspects of agentic AI:

https://www.amazon.com/Agentic-AI-Theories-Practices-Progress/dp/3031900251