The Agentic Ecosystem Security Gap: What 500 CISOs Just Told Us About the Breach You Haven’t Had Yet

Recently I read a report shared by Elias Terman of Vorlon: Vorlon’s Agentic Ecosystem Security Gap: 2026 CISO Report.

This Substack post is an in-depth analysis of that report and what it means for every organization deploying AI agents today.

Let me start with a number that should stop you cold: 99.4%.

Vorlon commissioned an independent survey of 500 U.S. enterprise CISOs, conducted by Censuswide between January and February 2026. In that survey, 99.4% of organizations reported at least one SaaS or AI ecosystem security incident in 2025.

That’s right. Only 3 of 500 organizations reported zero incidents. Across industries, company sizes, and security budgets, nearly every organization was breached through its SaaS and AI ecosystem last year.

And yet, those same organizations were running an average of 13 dedicated security tools.

This is the central paradox of the Agentic Ecosystem Security Gap 2026 CISO Report, and it is one of the most important documents published in the agentic AI security space this year. I want to walk you through what the data actually says, map it against the threat landscape I have been tracking through the OWASP Agentic Skills Top 10 (AST10) and the MAESTRO framework, and give you the mental model I believe explains it all.

The Architecture of the Problem

The Vorlon report opens with a framing that I have been trying to articulate for two years. The agentic ecosystem is not a product category or a deployment model. It is a single, interconnected system of SaaS applications, AI agents, API integrations, non-human identities, and the sensitive data that flows between all of them. It expands every time an employee connects a new AI tool, every time a SaaS vendor adds an integration, every time an agent is granted permissions to act.

The security problem is that no one is watching the interconnected system. They are watching the individual pieces.

Most enterprise security stacks were designed to monitor individual applications, app by app: their configurations, login events, and permission settings. That made sense when humans were the primary actors and risk lived inside discrete systems. It doesn’t hold when data is continuously moving between systems, carried by agents that operate autonomously. From Okta to Slack to GitHub to DocuSign, each system logs its own slice of the interaction, but none of them sees the full sequence.
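To make the "own slice of the interaction" point concrete, here is a minimal Python sketch of stitching per-system logs into one sequence. It assumes every system records a shared correlation key (a hypothetical `token_id` field); the log entries and field names are illustrative and do not reflect any vendor's actual schema.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical, simplified log entries. Real systems use different schemas,
# and a token_id shared across all of them is an assumption of this sketch.
okta_log = [{"ts": "2026-01-10T03:00:01", "token_id": "tok-1", "event": "token_issued"}]
slack_log = [{"ts": "2026-01-10T03:00:07", "token_id": "tok-1", "event": "file_read"}]
github_log = [{"ts": "2026-01-10T03:00:12", "token_id": "tok-1", "event": "repo_clone"}]


def stitch(logs_by_system):
    """Merge per-system logs into one time-ordered sequence per token."""
    sequences = defaultdict(list)
    for system, entries in logs_by_system.items():
        for entry in entries:
            ts = datetime.fromisoformat(entry["ts"])
            sequences[entry["token_id"]].append((ts, system, entry["event"]))
    # Sort each token's events chronologically to recover the full sequence.
    return {tok: sorted(events) for tok, events in sequences.items()}


timeline = stitch({"okta": okta_log, "slack": slack_log, "github": github_log})
for ts, system, event in timeline["tok-1"]:
    print(ts.isoformat(), system, event)
```

Viewed alone, each log shows a single unremarkable event. Only the merged timeline shows one token being issued and then used to read a file and clone a repository within seconds.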

The biggest risk is not inside your applications. It’s in the connections between them.

This is what enterprise AI deployment already looks like at scale.

Year One: AI Agents Are Already a Breach Vector

Gartner named agentic AI oversight the number-one cybersecurity trend for 2026. That designation should be read not as a prediction but as a confirmation. Vorlon’s survey data shows why:

75.4% of CISOs say AI agents are a critical or significant security risk

30.4% experienced suspicious AI agent activity in 2025

30.8% experienced unauthorized data exfiltration through SaaS-to-AI integrations

We are in Year One of serious enterprise AI deployment, and nearly one in three organizations has already had a security incident involving AI agents. AI agent security incidents occurred at roughly the same rate as social engineering attacks via SaaS (33.6%) and SaaS supply chain attacks (30%). We do not treat social engineering as a tail risk. We should not treat an AI agent compromise as one either.

The Confidence Gap That Is Actually a Visibility Gap

Here is where the Vorlon report gets genuinely unsettling, and where I believe the most important insight lives.

The survey reveals a set of contradictions that expose something more troubling than a simple confidence gap. CISOs are not just overestimating their protection. They are claiming capabilities and experiencing outcomes that cannot simultaneously be true.

89.2% claim strong or comprehensive OAuth token governance. Yet 27.4% were breached through compromised OAuth tokens or API keys

78.6% claim a comprehensive, real-time data flow map across SaaS and AI. Yet 86.8% say they cannot see what data AI tools are exchanging with SaaS applications

77% claim comprehensive behavioral monitoring with data-layer context. Yet 30.8% experienced unauthorized SaaS-to-AI data exfiltration

The report’s diagnosis is precise and I think correct. Configuration audits look like monitoring. Permission reviews look like governance. Single-application detection looks like ecosystem visibility. The tools are not lying about what they do. They are doing exactly what they were designed to do. The problem is that they were not designed for the threat they are now being asked to address.

This is not a reflection on individual security leaders. It is evidence that having multiple categories of tooling creates the appearance of coverage without delivering the substance of it.

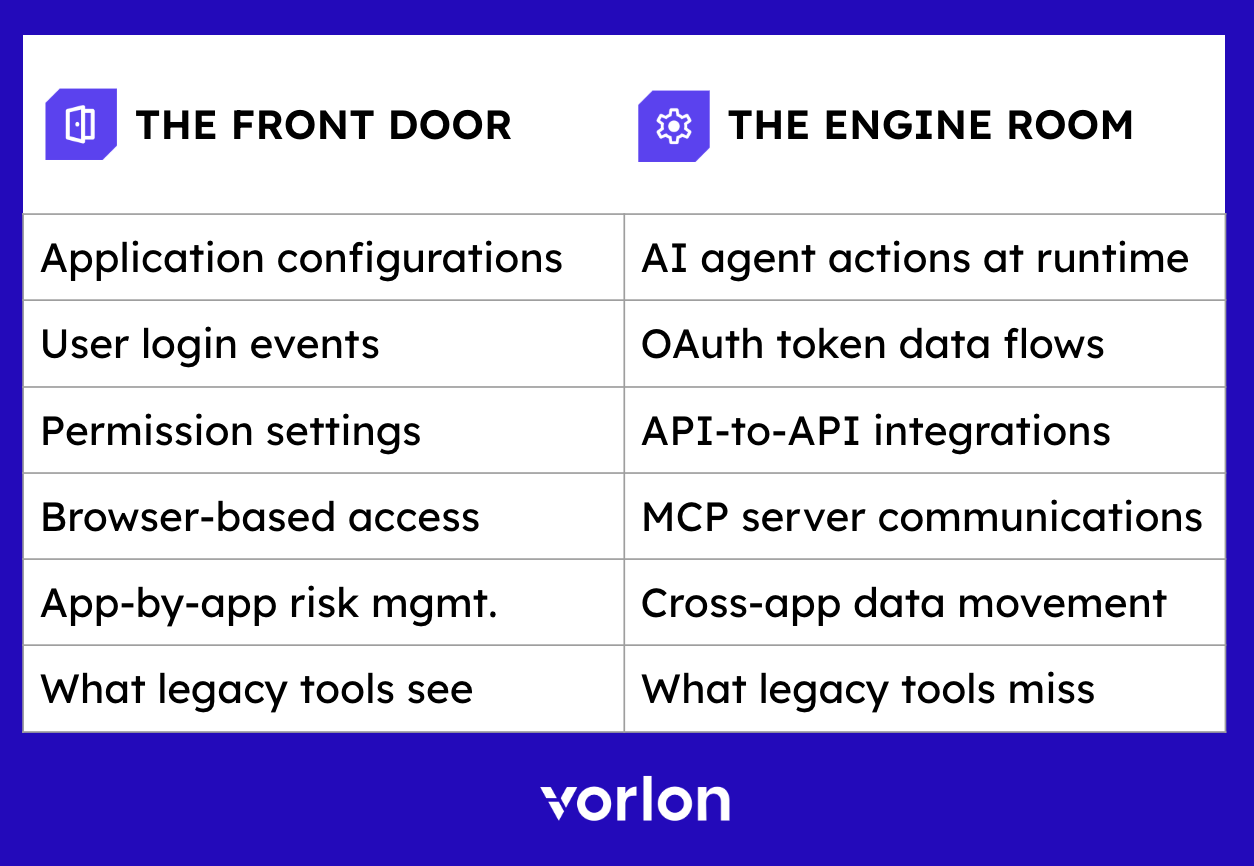

The Front Door vs. the Engine Room

Vorlon introduces the distinction between the front door and the engine room.

The tools most enterprises rely on were designed to monitor the front door, including application configurations, user login events, and permission settings. These were built for human users, browser-based access, and application-by-application risk.

The attack surface has moved to the engine room, which is the runtime layer where AI agents move sensitive data between systems, where OAuth tokens grant persistent cross-platform access, and where a single compromised integration can cascade silently across an entire SaaS and AI supply chain.

What legacy tools miss are AI agent actions at runtime, OAuth token data flows, API-to-API integrations, MCP server communications, and cross-app data movement.

This maps directly to a core problem in the MAESTRO framework’s Layer 3 (Agent Memory and Context) and Layer 4 (Agent Execution and Action). The risk is not at the policy level. The risk is at the execution level. An agent that has been granted legitimate access can still act maliciously, erroneously, or out of scope. Current tools have no visibility into what it actually does with that access.

SSPM and SASE: Legitimate Investments, Wrong Problem

The Vorlon report provides measured but clear treatment of the two categories most often presented as the answer: SSPM (SaaS Security Posture Management) and SSE/SASE (Security Service Edge/Secure Access Service Edge).

Both are legitimate investments. Neither was built to see what happens in the engine room.

SSPM audits which permissions exist, not what agents do with them at runtime. SSE/SASE monitors what traverses the network perimeter, not API-to-API data flows that bypass the network entirely. Thirty-nine percent of organizations use an SSPM tool. Of those, 42.8% say it only detects within individual applications or primarily functions as a configuration and compliance audit tool, not as a real-time cross-platform threat detection tool.

This is a critical distinction that I see conflated constantly in enterprise security conversations. Posture management tells you what your configuration looks like at a point in time. It tells you nothing about what an AI agent did with that configuration between snapshots.
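A small Python sketch illustrates the difference between a posture snapshot and runtime activity. The agent name, scopes, and record counts are all hypothetical, and the two helper functions are illustrative rather than a description of how any SSPM product works.

```python
# Two posture snapshots of an agent's granted scopes (hypothetical data).
snapshot_monday = {"agent-7": {"crm.read", "files.read"}}
snapshot_tuesday = {"agent-7": {"crm.read", "files.read"}}

# Runtime events recorded between the snapshots (hypothetical schema).
runtime_events = [
    {"agent": "agent-7", "scope": "crm.read", "records": 40},
    {"agent": "agent-7", "scope": "crm.read", "records": 5000},
    {"agent": "agent-7", "scope": "files.read", "records": 3},
]


def posture_diff(before, after):
    """Return scopes added or removed per agent between two snapshots."""
    diff = {}
    for agent in before.keys() | after.keys():
        added = after.get(agent, set()) - before.get(agent, set())
        removed = before.get(agent, set()) - after.get(agent, set())
        if added or removed:
            diff[agent] = {"added": added, "removed": removed}
    return diff


def records_moved(events):
    """Total records touched per (agent, scope) at runtime."""
    totals = {}
    for e in events:
        key = (e["agent"], e["scope"])
        totals[key] = totals.get(key, 0) + e["records"]
    return totals


print(posture_diff(snapshot_monday, snapshot_tuesday))  # empty: no config change
print(records_moved(runtime_events))
```

The snapshot comparison comes back empty because nothing about the configuration changed, while the runtime totals show the same agent pulling thousands of CRM records under a scope it was legitimately granted.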

The Structural Limitation: 83–87% Across Every Capability

When CISOs were asked to rate their current tooling across 11 limitation categories, every single factor was rated as having some level of limitation by 83–87%. The range spans only four percentage points. This statistical compression is important. This is not evidence that some tools are better than others in certain areas. It is evidence that the entire existing architecture shares the same structural deficiencies.

The top limitations reported:

Cannot see sensitive data flows across applications: 87%

Cannot see what data AI tools are exchanging with SaaS apps: 86.8%

Focus on configuration and compliance, not runtime threats: 86.2%

Too many siloed tools, no unified view: 85.8%

Lack behavioral analytics and anomaly detection: 85.8%

Cannot distinguish human from non-human behaviors: 83.4%

That last one is particularly significant from an agentic AI security perspective. The inability to distinguish human from non-human behavior is the foundational gap that makes every other limitation worse. If you cannot tell whether a human or an agent took an action, you cannot apply appropriate controls, you cannot build accurate behavioral baselines, and you cannot detect anomalous agent behavior against those baselines.
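Here is a minimal Python sketch of why separate baselines matter. It assumes identities are already labeled as human or agent, which is exactly the capability most respondents say they lack; the sample counts and the z-score threshold of 3 are illustrative assumptions.

```python
from statistics import mean, pstdev

# Hypothetical hourly API-call counts, labeled by identity type.
history = {
    "human": [12, 15, 9, 14, 11, 13],
    "agent": [280, 310, 295, 305, 290, 300],
}


def z_score(value, samples):
    """Standard score of value against a baseline sample."""
    sigma = pstdev(samples)
    return (value - mean(samples)) / sigma if sigma else 0.0


def is_anomalous(value, identity_type, threshold=3.0):
    """Flag value if it deviates from its own identity type's baseline."""
    return abs(z_score(value, history[identity_type])) > threshold


# 300 calls per hour is routine for an agent but far outside human behavior.
print(is_anomalous(300, "agent"))
print(is_anomalous(300, "human"))

# With one blended baseline, the same reading no longer stands out.
blended = history["human"] + history["agent"]
print(abs(z_score(300, blended)) > 3.0)
```

With per-type baselines, 300 calls in an hour is flagged for a human account and ignored for an agent. Against the blended baseline it is not flagged at all, so a human account behaving like an agent would go unnoticed.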

This is exactly the problem that the Proof of Control (PoC) framework is designed to address at the cryptographic identity layer. Still, most enterprises are not yet operating at that level of control assurance.

No Consensus on Who Owns the Breach

One data point in the Vorlon report that I have not seen discussed enough is that when a SaaS vendor announces a breach, there is no industry consensus on who owns the impact assessment. Responses span nine organizational functions with no single team cited by more than 21.8%.

SaaS security team: 21.8%

IT security leadership: 13.8%

Data security: 11.6%

IT operations: 10.6%

Security operations: 10.6%

Risk and compliance: 9.8%

Cloud security: 9.2%

Security engineering: 7.2%

Application owner: 5.2%

No defined owner: 0.2%

This is a governance gap as much as a technical gap. The agentic ecosystem has not yet found a settled home in the enterprise security organization. Almost every respondent names an owner (only 0.2% report none), but ownership is scattered across nine functions, which means no single function is building the muscle memory to respond. When the incident happens (and based on the 99.4% figure, the question is not whether but when), the response will be improvised.

48.8% of organizations would rely on manual response to an active SaaS exfiltration. Manual response. Human-speed processes against API-speed breach cascades.

Supply Chain Risk: 99.2% Concerned, 0.8% Protected

The Vorlon report documents the 2025 supply chain incidents that are now part of enterprise security lore: the Salesforce ShinyHunters vishing attack, the Salesloft/Drift OAuth hijack, and the Gainsight supply chain compromise. Following these events, 99.2% of CISOs report concern about a similar incident in 2026. Only 0.8% feel adequately protected against one.

This is a 124-to-1 ratio of concern to confidence (99.2% divided by 0.8%). I am not aware of any other risk category in enterprise security that produces this kind of gap.

30% already experienced a supply chain attack in 2025. 46.6% call it a top priority risk. Only 51.2% have an automated IR playbook for active SaaS exfiltration.

The supply chain attack vector is particularly dangerous in the agentic context because the blast radius calculation is so difficult. When a SaaS vendor is compromised, every organization that had AI agents operating through that vendor’s API is potentially exposed. Not just for the data that traversed that API, but for every downstream system those agents were also authorized to access. One compromised integration node can become the entry point for a cascading multi-system breach that is nearly impossible to reconstruct from siloed logs.
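The cascading exposure described above can be modeled as graph reachability. The following Python sketch assumes a known integration graph in which an edge from A to B means a credential or agent operating in A is also authorized to reach B. The system names are hypothetical, and building that graph in the first place is the hard part this sketch skips.

```python
from collections import deque

# Hypothetical integration graph: an edge A -> B means a credential or agent
# operating in A is also authorized to reach B.
access_graph = {
    "vendor-chat": ["crm", "ticketing"],
    "crm": ["data-warehouse"],
    "ticketing": ["email"],
    "data-warehouse": [],
    "email": [],
    "hr-system": ["payroll"],
    "payroll": [],
}


def blast_radius(graph, compromised):
    """Return every system reachable from the compromised node (BFS)."""
    seen = {compromised}
    queue = deque([compromised])
    while queue:
        node = queue.popleft()
        for neighbor in graph.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    seen.discard(compromised)  # report only downstream systems
    return seen


print(sorted(blast_radius(access_graph, "vendor-chat")))
# ['crm', 'data-warehouse', 'email', 'ticketing']
```

A breadth-first traversal is enough here because the question is only which systems are reachable. Weighting by data sensitivity or token scope would be a natural extension, but the source does not specify how that should be done.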

The AI and SaaS SecOps Coverage Gap

Security operations teams have spent years building mature workflows for endpoints and physical infrastructure. That investment has not extended to the SaaS and AI ecosystem.

Fewer than half of CISOs reach comprehensive coverage in any of the three core SecOps workflow areas. For exposure management, comprehensive coverage sits at 41.8%. For threat hunting and investigation, 44%. For incident response, 38.2%.

The good news, and there is genuine good news here, is that 93%+ plan to add or expand coverage across all three, with nearly half intending to do so within 12 months. Budget is following intent: 86.8% plan to increase SaaS security budgets in 2026, and 84.2% plan to increase AI security budgets.

The risk is that this investment goes into the same tool categories that produced the current coverage gaps. The Vorlon report makes this point directly: 99.4% were breached despite running an average of 13 dedicated security tools. More tools in the same categories will produce the same results.

What Agentic AI Ecosystem-Layer Security Actually Requires

The report’s final section articulates what organizations genuinely need to close the gap. I want to map this against what I have been developing in the context of the OWASP and MAESTRO frameworks, as the alignment is strong.

Continuous discovery across all SaaS apps, AI agents, integrations, and non-human identities, including shadow AI and shadow integrations. In the MAESTRO model, this maps to the asset inventory requirement at Layer 2 (Data Operations and Storage). You cannot protect what you cannot see.

Cross-app data flow mapping showing how sensitive data moves between every SaaS app, AI tool, and integration in near real time. This is the technical capability that most organizations report lacking. It requires instrumentation at the API and integration layer, not just at the application configuration layer.

Behavioral monitoring for human and non-human identities, with data-layer context that distinguishes compromised agents from normal operation. This is the non-human identity problem at its core. Agent behavior needs its own baseline, its own anomaly detection model, and its own alert taxonomy, distinct from the human user behavioral analytics that current UEBA tools are built around.

AI Agent Flight Recorder for a forensically complete, cross-SaaS audit trail of every agent action, mapped to sensitive data and blast radius. This is the Proof of Control requirement articulated from the incident response direction. If you cannot reconstruct what an agent did across every system it touched, you cannot determine the blast radius of a compromise, nor can you provide the board-level accountability now being demanded.

Blast radius calculation answering the board-level question in minutes: which data, which systems, which identities are at risk. This is what every CISO will be asked when a breach is discovered. The organizations that can answer it in minutes rather than weeks will have a fundamentally different post-incident posture.

Cross-app coordinated response with native SecOps integration across exposure management, threat hunting, and incident response. This closes the ownership gap. When the blast radius is understood and the affected systems are identified, the response needs to be orchestrated across the entire ecosystem simultaneously. Not executed application by application by whoever happens to own each one.
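One way to picture the flight recorder capability from the list above is as an append-only log in which each record carries the hash of the one before it. The Python sketch below is an illustration under that assumption, not a description of Vorlon's implementation; the record fields (`agent_id`, `action`, `target`) are hypothetical. Hash chaining makes tampering detectable but does not by itself make the log immutable.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body):
    """Stable SHA-256 over a record body (keys sorted for determinism)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_record(log, agent_id, action, target):
    """Append an agent action, linking it to the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"agent_id": agent_id, "action": action, "target": target, "prev": prev_hash}
    log.append({**body, "hash": _digest(body)})


def verify(log):
    """Recompute every hash; False if any record was altered or reordered."""
    prev_hash = GENESIS
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if record["prev"] != prev_hash or record["hash"] != _digest(body):
            return False
        prev_hash = record["hash"]
    return True


trail = []
append_record(trail, "agent-7", "read", "crm/contacts")
append_record(trail, "agent-7", "upload", "files/export.csv")
print(verify(trail))               # True
trail[0]["target"] = "crm/leads"   # simulate after-the-fact tampering
print(verify(trail))               # False
```

In practice the records would be written to durable storage outside the agent's own control, so that a compromised agent cannot rewrite its own history.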

The Deeper Implication: This Is a Structural Problem

I want to close with the interpretation that Vorlon’s survey gestures toward, but does not fully state.

The reason 99.4% of organizations were breached through their SaaS and AI ecosystem is not that they made bad vendor choices, hired weak security teams, or underinvested in tooling. They were breached because the security architecture that worked for the human-speed, browser-based, application-centric enterprise does not work for the machine-speed, API-native, multi-agent enterprise that 2025 brought into existence.

This is a structural transition, not a maturity gap. The organizations that recognize this and build security operations that cover the engine room, not just the front door, will be the ones that avoid the next wave of AI-era breaches.

The organizations that do not will spend 2026 adding their fourteenth dedicated security tool and remaining among the 99.4%.

The agentic workforce is here. The security architecture to govern it is not. That gap is where breaches live, and Vorlon’s Agentic Ecosystem Security Gap 2026 CISO Report is the most precise quantification of it I have seen published. It deserves careful reading by every security leader deploying AI agents today, which, as of 2026, means nearly every security leader.

FAQs

Q: What is an agentic ecosystem?

An agentic ecosystem is the combined layer of SaaS applications, AI agents, third-party integrations, non-human identities, and the data flows connecting them. Unlike traditional IT infrastructure, this layer operates largely autonomously — AI agents call APIs directly, chain actions across applications, and move sensitive data without a human in the loop. Most security tools were built before this layer existed and have no visibility into it. Vorlon’s Agentic Ecosystem Security Platform was purpose-built to secure this layer — mapping every app, agent, identity, and data flow across 1,000+ connected services.

Q: What is the biggest cybersecurity gap facing CISOs in 2026?

According to Vorlon’s 2026 CISO Report — a survey of 500 U.S. enterprise CISOs — the biggest gap is the disconnect between perceived security coverage and actual runtime visibility. 89.2% of CISOs claim strong OAuth governance, yet 27.4% were breached via OAuth or API keys. 78.6% claim they have data flow maps, yet 86.8% cannot see what data AI tools exchange with SaaS applications. Organizations averaged 13 dedicated security tools in 2025 — and 99.4% still experienced at least one SaaS or AI ecosystem security incident.

Q: Why are existing security tools failing to protect AI agents?

Most security tools — including SSPMs and SASE platforms — were built for the “front door” of enterprise security: app configurations, login events, and human user permissions. AI agents operate in the engine room: the runtime layer of OAuth tokens, API-to-API calls, MCP server communications, and autonomous data movement. SSPMs audit static permissions within individual apps; SASE monitors the network perimeter. Neither was built to monitor what AI agents actually do after access is granted. 87% of CISOs in Vorlon’s 2026 report say they cannot see sensitive data flows across applications. Vorlon addresses this directly by monitoring 100% of backend API traffic — including SaaS-to-SaaS and agent-to-SaaS flows — without agents, proxies, or inline inspection.

Q: What is a SaaS supply chain attack?

A SaaS supply chain attack occurs when an attacker compromises a third-party SaaS application or integration connected to your environment and uses that foothold to access your data or systems. Real examples from 2025 include the Salesforce ShinyHunters vishing attack, the Salesloft/Drift OAuth hijack, and the Gainsight compromise. Because SaaS applications are deeply integrated through APIs and OAuth tokens, a breach at one vendor can propagate rapidly across an entire ecosystem. 99.2% of CISOs in Vorlon’s 2026 report are concerned about a SaaS or AI supply chain breach in 2026 — yet only 0.8% feel adequately protected. Vorlon detects supply chain compromise at the integration layer in real time, before the blast radius spreads.

Q: What is a non-human identity and why is it a security risk?

A non-human identity (NHI) is any identity not associated with a human user — including service accounts, API keys, OAuth tokens, bot accounts, and AI agents. These identities often have broad permissions, rarely rotate credentials, and operate entirely outside the visibility of traditional identity governance tools. 83.4% of CISOs in Vorlon’s 2026 report say their tools cannot distinguish between human and non-human identity behavior — meaning anomalous agent activity goes undetected. Vorlon maintains a complete inventory of all non-human identities across the agentic ecosystem and monitors their behavior continuously against established baselines, flagging deviations that indicate compromise, abuse, or misconfiguration.

Q: What is an AI Agent Flight Recorder?

An AI Agent Flight Recorder is a forensically complete, immutable audit trail of every AI agent action — every API call, every data movement, every identity event — mapped to the sensitive data it touched and the downstream systems affected. It gives security teams the ability to reconstruct exactly what an agent did, what data it accessed, and where that data went — in minutes rather than days. Vorlon’s AI Agent Flight Recorder is the first purpose-built implementation of this capability, queryable in natural language via the Vorlon MCP Server and accepted as compliance evidence for SOC 2, HIPAA, GDPR, EU AI Act, NIS2, and DORA.

Q: What is blast radius in AI security?

Blast radius refers to the full scope of systems, data, and identities affected by a security incident involving an AI agent or compromised integration. Because AI agents operate across multiple SaaS applications simultaneously, a single compromised token or agent can propagate damage across an entire ecosystem rapidly. Mapping blast radius — identifying exactly which data is at risk, which identities are involved, and which downstream systems are affected — is one of the six capabilities Vorlon’s 2026 CISO Report identifies as critical for agentic ecosystem security. Vorlon calculates blast radius automatically for every security finding, giving teams the context needed to prioritize and respond precisely.

Q: What security capabilities do CISOs need to secure their agentic ecosystems?

Vorlon’s 2026 CISO Report identifies six required capabilities: continuous discovery of SaaS apps, AI agents, integrations, and non-human identities including shadow AI; near real-time data flow mapping across applications; behavioral monitoring that distinguishes human from non-human identity activity; an AI Agent Flight Recorder providing a forensic cross-SaaS audit trail; blast radius calculation to rapidly identify at-risk data and systems; and coordinated SecOps response orchestrated across the entire ecosystem. Vorlon’s Agentic Ecosystem Security Platform delivers all six through its patented DataMatrix™ engine, which converts fragmented telemetry into a live model of every app, agent, identity, and data flow across 1,000+ connected services — with full ecosystem visibility established within 24 hours of deployment.

About Vorlon

Vorlon is the Agentic Ecosystem Security Platform that protects the converged SaaS and AI ecosystem where agents, APIs, integrations, and non-human identities operate at machine speed. Its patented DataMatrix™ technology maps how sensitive data, identities, and integrations interact across enterprise systems, giving security teams the visibility, forensics, and remediation to manage sensitive data exposure, prevent breaches, and deploy AI at scale.

https://vorlon.io

Thanks to Vorlon for sponsoring this article. If you would like your sponsored article to reach 84k email subscribers instantly via Substack, with 865,272 monthly views, please reach out to info@distributedapps.ai

83.4% can't distinguish human from non-human identity behavior, and that number explains most of the breach surface you're describing. The agent isn't the weak point, the audit trail is. I run agents overnight against local tools and external APIs, and the logging I trust most is what I built myself: append-only JSONL files per session, chain IDs threaded through every tool call. Not because built-in tooling is bad but because I need to answer "what did it do at 3am" without hunting across five dashboards. The visibility paradox (89% claim OAuth governance, 27% got breached anyway) reads like the classic gap between policy docs and runtime behavior. Static configs don't catch machine-speed flows.